Measuring What Matters In Document Understanding

Resume parsing sits at the intersection of computer vision, natural language processing, and real-world hiring workflows. Yet, despite its importance, evaluation remains fragmented.

Document Understanding systems are expected to extract structured, reliable information from visually complex inputs. Resumes push these systems to their limits: inconsistent layouts, dense semantics, and limited high-quality annotated datasets.

The result is a systemic gap, no standardized way to measure performance across the dimensions that actually matter in production.

At Factored, engineers from R&D in the Machine Learning Center of Excellence set out to close that gap. The goal was a framework grounded in real-world deployment: measurable, reproducible, and aligned with how organizations evaluate talent at scale.

A Multi-Dimensional Evaluation Framework

Most benchmarks isolate a single task. Real systems don’t have that luxury.

To ensure Production-Grade reliability, we designed a multi-stage evaluation framework that measures resume parsing across four critical dimensions:

- Layout analysis: Can the model correctly detect and segment document structure?

- Reading order: Does it reconstruct information in the correct sequence?

- Text extraction: How accurately does it transcribe content?

- Semantic understanding: Can it correctly interpret and classify entities?

This approach reflects how resume parsing systems are actually used: end-to-end, not in isolation.

Building Evaluation Rigor

To enable meaningful evaluation, we built a dataset designed for both scale and precision.

- 112 real-world resumes, converted into 218 images

- Coverage across Machine Learning, Data Science, Software Engineering, and Data Engineering roles

- Bilingual dataset (English and Spanish)

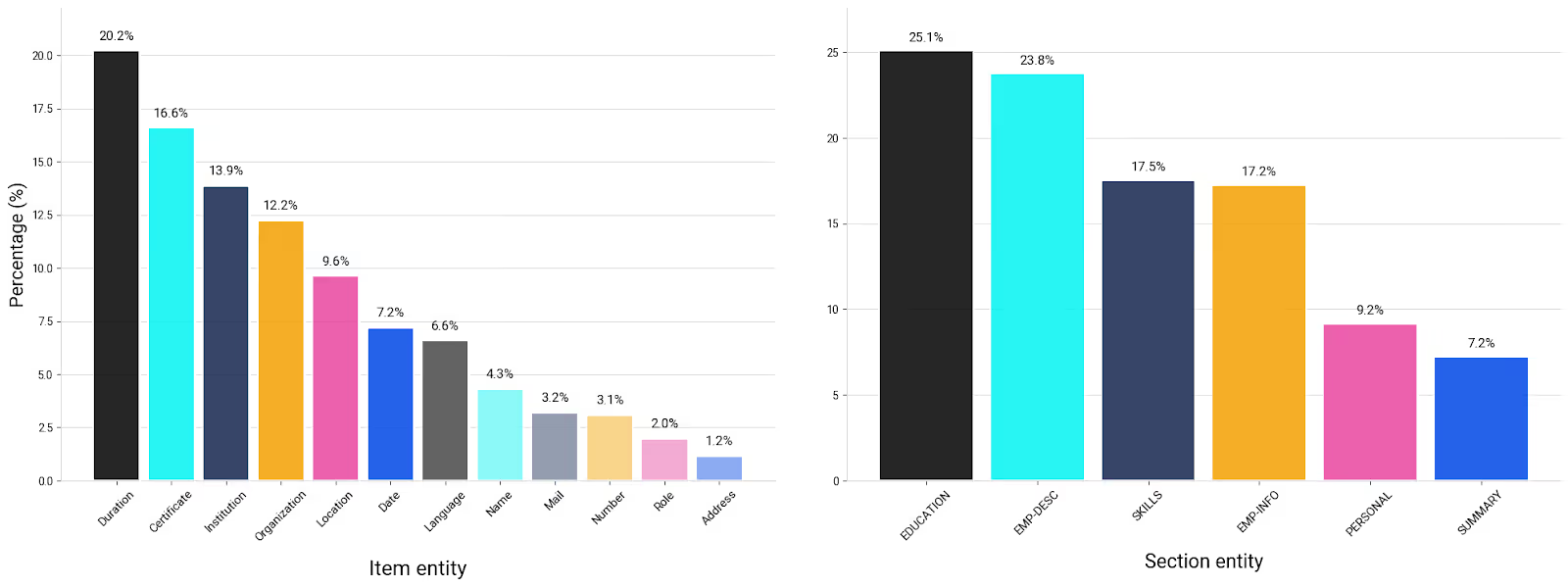

Annotations were structured at two levels:

- Item-level entities: names, dates, roles

- Section-level entities: education, skills, experience

Using a BIO tagging scheme and reading order metadata, the dataset includes 12,000+ bounding boxes and transcriptions.

Annotation was accelerated through an OCR-assisted pipeline using Label Studio, combining automation with human validation to ensure quality and consistency.

This is not synthetic benchmarking. It reflects the complexity of real hiring pipelines.

We Measured 4 Evaluation Dimensions

Each evaluation dimension was paired with metrics aligned to its function:

- Layout analysis: Mean Average Precision (IoU at 0.5 and 0.75)

- Text extraction: Word Error Rate (WER), Character Error Rate (CER)

- Reading order: BLEU, Normalized Levenshtein Distance (NDLD)

- Semantic understanding: BERTScore

Evaluating all four dimensions simultaneously is uncommon, but necessary. In production, failure in any one layer degrades the entire system.

Measured Results: A Syntax Of Authority

Our evaluation reveals that current "Best-in-Class" claims are often missing the technical receipts required for $1M+ AI deployments.

1. OCR is stronger than expected

DotsOCR achieved a BLEU score of 95.22 and WER of 0.08, outperforming all systems in text extraction and reading order.

The implication: traditional components still set a high baseline.

2. Context changes everything for VLMs

Vision-Language Models consistently improved when provided full document context instead of isolated pages. End-to-end reasoning, not page-level inference, is where these models differentiate.

3. Smaller models can compete

Ministral-3:8b delivered competitive BERTScores against GPT-4.1-mini and Gemini 2.5, with faster inference and fewer hallucinations.

Efficiency is no longer a tradeoff, it’s a design choice.

4. Metrics are not neutral

BERTScore, while useful, does not fully capture structural correctness.

Models can score high while misplacing fields, or score lower despite correct structure.

AI Teams Need Standardized Evaluation

Resume parsing is a systems problem tied to business outcomes.

Without standardized evaluation:

- Model comparisons are unreliable

- Improvements are hard to quantify

- Production risks go undetected

For organizations building AI-driven hiring or document pipelines, this creates hidden failure points.

At Factored, we’ve seen that the difference between a working model and a production-ready system is not accuracy alone, it’s evaluation discipline.

Toward a new standard in document understanding

This work reflects a broader shift: from model-centric thinking to system-level rigor.

As AI systems become embedded in critical workflows, evaluation must evolve alongside them, multi-dimensional, context-aware, and grounded in real use cases.

That’s where leading AI organizations are already heading.

And it’s where we continue to focus on building the frameworks that make them reliable at scale.

-

This framework was developed by Rafael Mosquera and Elizabeth Granda from Factored’s R&D Team with expertise in AI & Machine Learning. Their work reflects a consistent principle: combine research-grade rigor with production reality so AI systems deliver impact.