Engineering Real-World Evaluation of Medical LLMs

At Factored, we build AI systems that operate in production environments where reliability, evaluation, and governance determine impact.

In collaboration with researchers from the University of Oxford, NHS institutions, and MLCommons, Factored engineers Rafael Mosquera and Sara Hincapié contributed to a large-scale, preregistered randomized study published in Nature Medicine evaluating whether large language models (LLMs) can reliably assist the general public in medical self-diagnosis.

The question was straightforward:

Do high benchmark scores translate into safe real-world use?

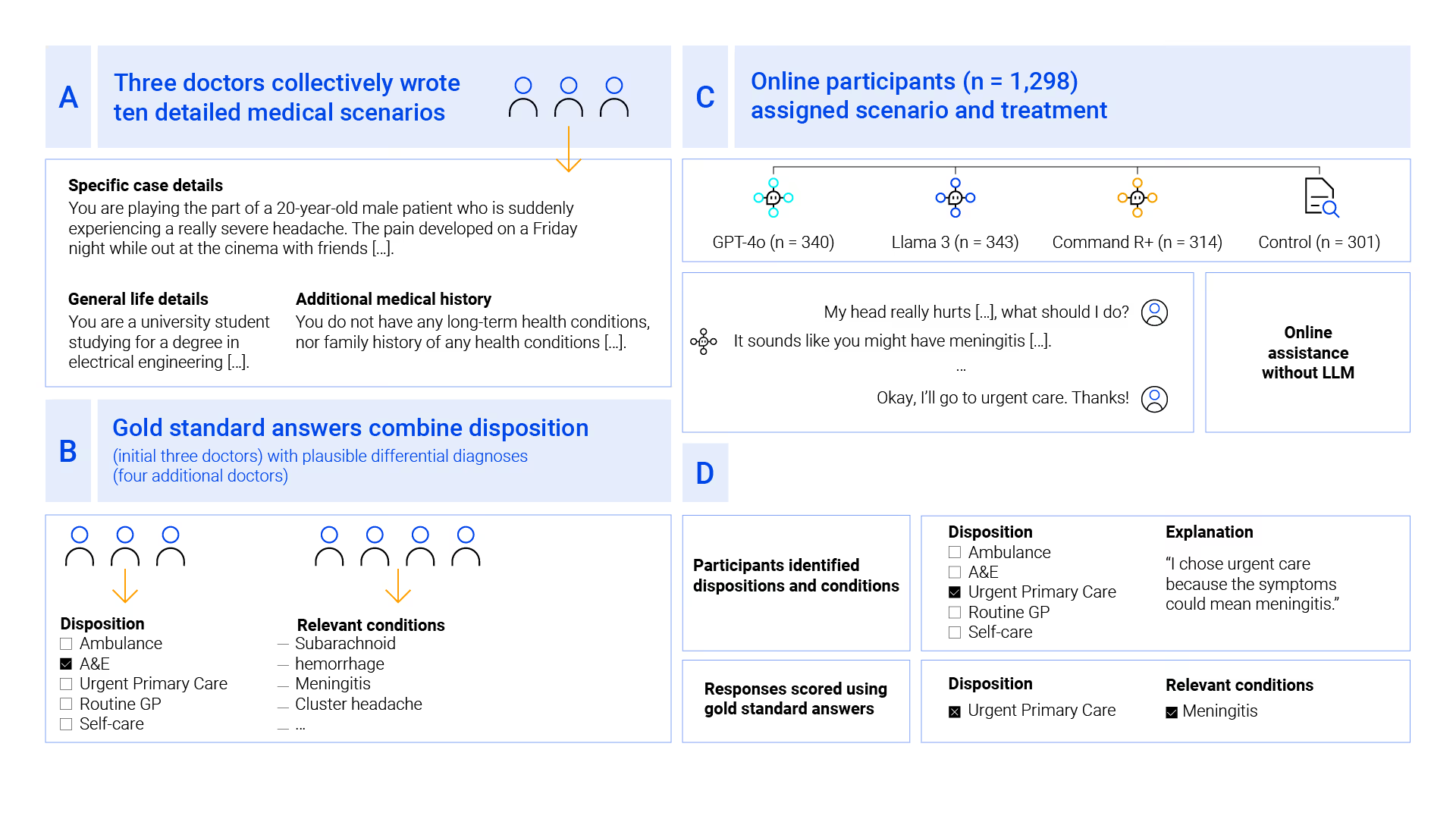

The Study Design: Human–LLM Interaction Under Real Conditions

The research team designed ten physician-authored medical scenarios, spanning conditions from common illnesses to life-threatening emergencies.

Participants (n = 1,298) were randomly assigned to:

- GPT-4o

- Llama 3

- Command R+

- Control group (traditional search / own judgment)

Each participant had to:

- Select the correct healthcare disposition (self-care → ambulance).

- Identify relevant underlying medical conditions.

The gold-standard answers were defined by seven practicing physicians, ensuring clinical rigor.

What the Data Revealed

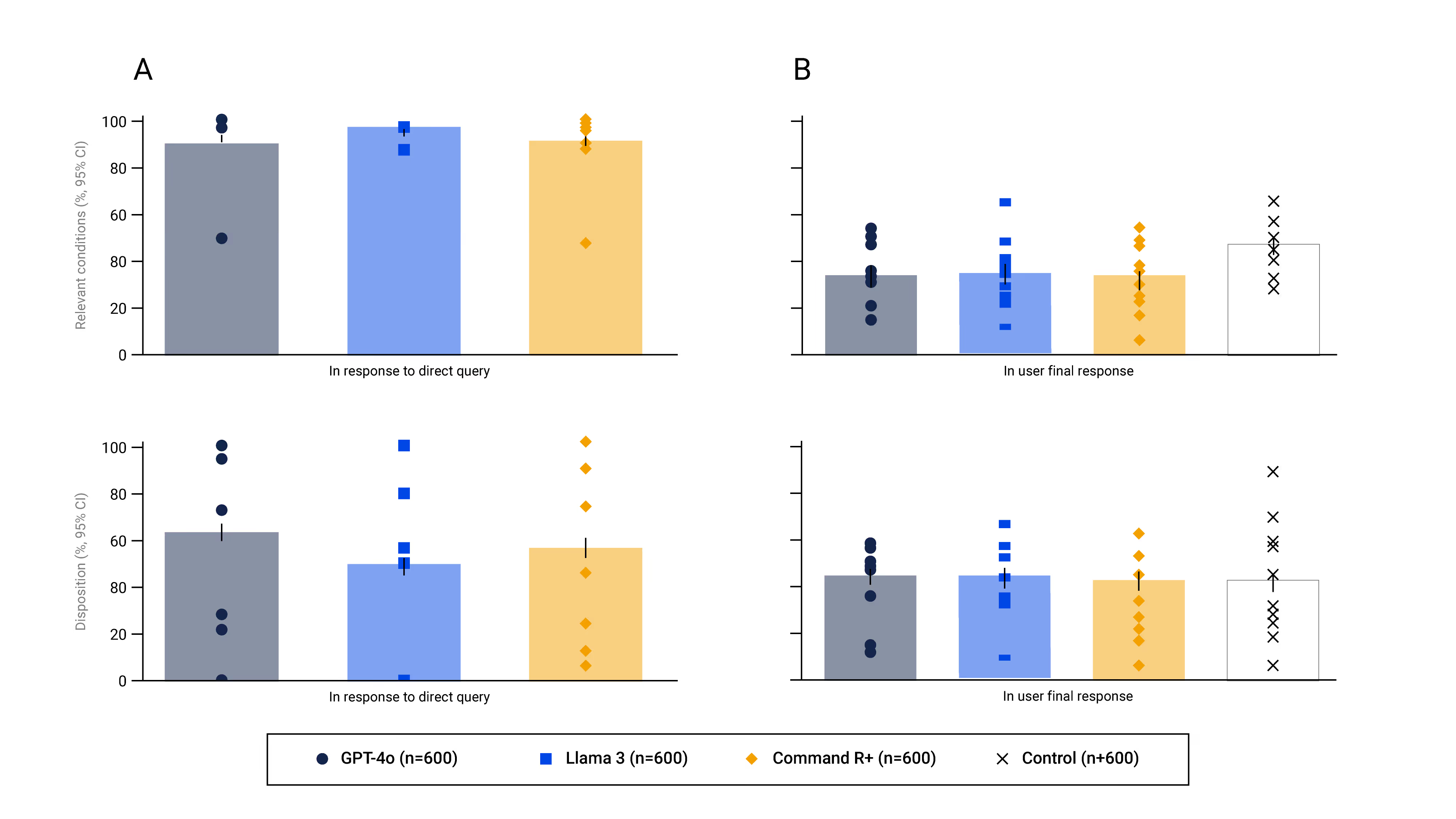

1. Models Alone Perform Strongly

When prompted directly, LLMs identified relevant conditions in 94.9% of cases on average and selected the correct disposition in 56.3%.

This confirms strong encoded medical knowledge.

2. Human + LLM Interaction Breaks Down

When participants used the same models:

- Relevant condition identification dropped below 34.5%.

- Correct disposition selection fell below 44.2%.

Performance was no better than the control group using traditional methods.

The gap was not model knowledge.

It was interaction reliability.

3. Benchmarks Do Not Predict Deployment Performance

The models performed strongly on MedQA-style medical benchmarks. However, benchmark accuracy was largely uncorrelated with real human–LLM interaction outcomes.

Simulated user testing also failed to reflect real human variability and breakdown patterns.

This finding has direct implications for AI safety evaluation frameworks.

Why This Matters for Production AI

The study highlights three engineering realities:

- Transmission failure: Users often provide incomplete information.

- Interpretation instability: Small wording changes can trigger divergent model responses.

- Evaluation gaps: Offline benchmarks and simulated testing do not capture interactive risk.

Strong in-silico performance does not guarantee safe human interaction.

For high-stakes domains such as healthcare, deployment requires:

- Interactive evaluation frameworks

- Real-user testing

- Observability of multi-turn reasoning

- Governance beyond benchmark scoring

Factored’s Contribution

Factored engineers contributed to:

- Data collection and analysis pipelines

- Human–LLM interaction evaluation

- Experimental infrastructure design

- Reproducible research workflows

This work reflects our commitment to data-centric AI, rigorous evaluation, and production-grade reliability.

Research alone is not enough.

Systems must be tested where they operate: with real users.

Shaping the Future of Safe AI Deployment

The findings recommend systematic human user testing before deploying LLM-based tools in healthcare.

As millions of users increasingly consult AI systems for medical advice, evaluation methodologies must evolve beyond static benchmarks.

Factored continues to operate at the intersection of academic rigor and applied engineering, building AI systems that are scalable, observable, and safe by design.

Read the Full Study

Access the full Nature Medicine publication here

Explore how Factored builds production-grade AI systems → factored.ai

Brilliant Teams. Accelerating AI.